AI contestability is becoming one of the most important ideas in responsible AI, especially when automated systems affect people’s access to jobs, credit, healthcare, public services, or legal outcomes. The reason is simple: if a system can influence a high-stakes decision, people need more than a technical explanation of how it works. They need a meaningful way to question, challenge, and potentially reverse harmful outcomes. Research in AI ethics and governance increasingly treats contestability as a safeguard against fallible, unjust, or unaccountable automated decision-making, rather than as a cosmetic add-on to explainability.

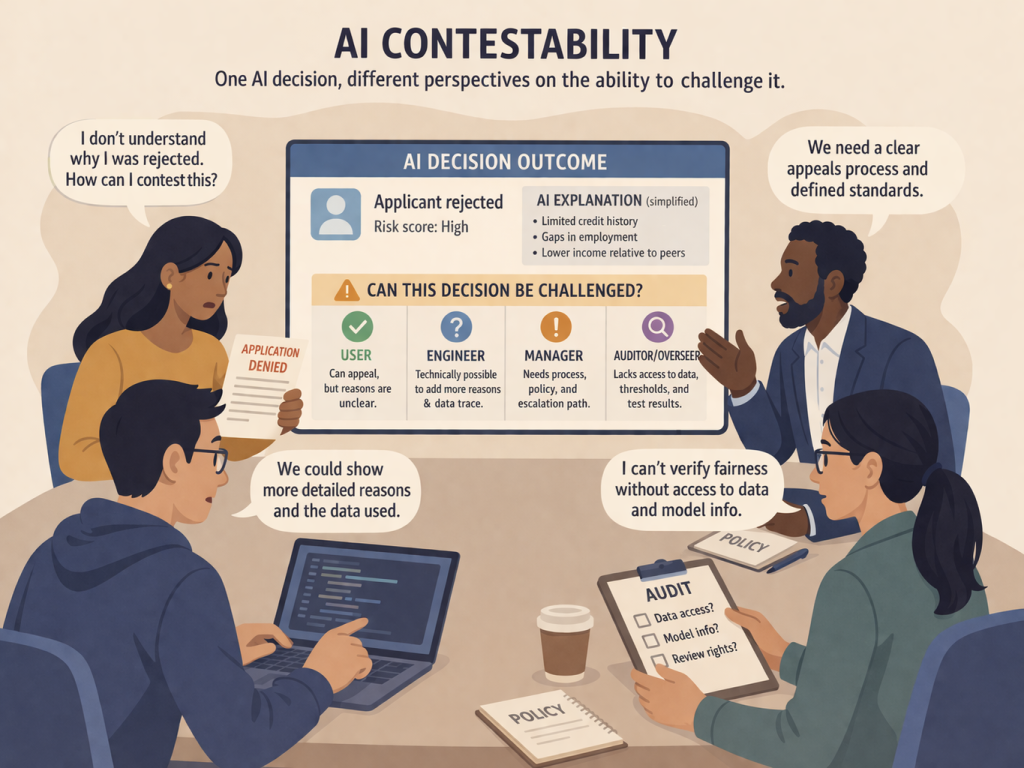

This makes AI contestability a stronger and more useful concept than explainability as it is popularly discussed. Explainability asks whether a system can provide reasons or descriptions for an output. Contestability goes further: it asks whether those affected by a decision, or those responsible for overseeing the system, have the information, authority, and process needed to challenge that decision in practice. That difference matters because an explanation without recourse may still leave people powerless.

Why AI contestability matters

The push toward AI contestability did not emerge by accident. Scholars have argued that as automated decision-making expands, harmful outcomes cannot be addressed only after deployment through vague promises of human oversight. A design framework published in Minds and Machines defines contestable AI systems as systems that are open and responsive to human intervention throughout the lifecycle, including design, development, and post-deployment use. That same framework argues that contestability should not be limited to a post-hoc appeal after the system has already acted; it should shape the system from the beginning.

This matters because automated systems are not only technical tools. They are sociotechnical systems embedded in institutions, rules, workflows, and power relationships. The accountability literature makes a similar point: AI governance cannot be reduced to one metric or one explanation method, because accountability itself is multifaceted and context-dependent. One recent analysis defines AI accountability in terms of answerability and identifies conditions such as authority recognition, interrogation, and limitation of power as necessary to make accountability meaningful. In other words, if nobody can ask questions, nobody has to answer them.

That is why AI contestability matters so much in high-stakes settings. If an AI-assisted hiring system filters candidates unfairly, a loan model denies credit, or a medical AI contributes to a flawed recommendation, the ethical issue is not exhausted by showing a saliency map, a feature weight, or a confidence score. The deeper question is whether the decision can be challenged by someone with enough standing, information, and institutional pathway to make that challenge matter.

Explainability helps, but AI contestability asks for more

One of the clearest findings in the literature is that explanation and contestation are related but not identical. In AI & Society, Henin and Le Métayer argue that explainability is useful but not sufficient for the legitimacy of algorithmic decision systems. Their core claim is that high-stakes systems require justifiability and contestability, not explanation alone. They distinguish these ideas carefully: an explanation helps a person understand, while a justification aims to show that the decision is good or appropriate according to some independent norm.

That distinction is powerful because many debates about responsible AI still assume that once a model becomes explainable, the ethical problem has largely been solved. But explanations can fail in several ways. They can be too technical, too abstract, too narrow, or too disconnected from the norm that actually matters in the case. A person denied a benefit may not just want to know how the system arrived at a result. They may want to know whether the decision was justified, what standard it was measured against, and how to argue that the standard was applied incorrectly.

Research on AI diagnostics in medicine strengthens this point from a different angle. In Artificial Intelligence in Medicine, Ploug and Holm argue that, from a patient-centric perspective, explainability is best understood as effective contestability. Their paper identifies four domains of information needed for meaningful contestation in diagnostic AI: how data is used, what biases may exist, how the system performs, and how labor is divided between the AI system and healthcare professionals. That is a much richer requirement than “the system should provide an explanation.”

So AI contestability does not reject explainability. It places explainability in a larger structure. Explanations are useful when they help someone understand enough to raise a specific challenge. But without procedures, norms, review channels, and the possibility of response, explanations alone can become informational theatre rather than genuine recourse.

AI contestability depends on power, timing, and institutional design

A strong theme across the research is that AI contestability is not only about users or decision subjects. It also concerns operators, reviewers, and institutions. A 2025 article in AI and Ethics introduces the concept of operator contestability: the principle that those overseeing AI systems must have the necessary control to be accountable for decisions made with those systems. The paper argues that designers have a duty to ensure operators possess not only epistemic access to relevant reasons, but also the authority and cognitive capacity to act on them.

This is an important advance because many real-world harms happen even when a human is “in the loop.” A nominal human reviewer may still be unable to challenge a model meaningfully if the workflow is rushed, the interface is opaque, the organization discourages intervention, or the human lacks tools to question the system’s assumptions. The operator contestability paper explicitly argues that accountability weakens when operators do not have the tools or authority to uncover and act on problems such as bias or invalid conclusions.

Timing matters too. The contestability-by-design framework argues that contestation should exist ex ante as well as post hoc. Ex-ante contestability means organizations should create room to challenge data choices, modeling assumptions, and design decisions before the system harms anyone in production. Post-hoc contestability means that once a decision is made, people still need procedures to contest it. This lifecycle view is one reason contestability is more demanding than standard XAI: it treats challengeability as a property of the whole system, not just its final output.

This also connects directly to accountability. The accountability literature stresses that governance depends on context, forum, process, standards, and implications. In plain terms, a meaningful challenge requires answers to practical questions: Who hears the complaint? By what standard is the decision reviewed? What evidence counts? What happens if the challenge succeeds? Without those elements, contestation remains symbolic rather than effective.
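The four practical questions above can be written down as a simple record, which makes it easier to see when a challenge process is only symbolic. What follows is a minimal Python sketch, not drawn from any of the cited papers: the class name `ContestationProcess`, its field names, and the example values are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ContestationProcess:
    """Hypothetical record of how a decision can be challenged."""

    forum: Optional[str] = None             # who hears the complaint
    review_standard: Optional[str] = None   # the norm the decision is reviewed against
    admissible_evidence: list[str] = field(default_factory=list)  # what evidence counts
    remedy_if_upheld: Optional[str] = None  # what happens if the challenge succeeds

    def missing_elements(self) -> list[str]:
        """Return the names of the practical questions left unanswered."""
        gaps = []
        if not self.forum:
            gaps.append("forum")
        if not self.review_standard:
            gaps.append("review_standard")
        if not self.admissible_evidence:
            gaps.append("admissible_evidence")
        if not self.remedy_if_upheld:
            gaps.append("remedy_if_upheld")
        return gaps

    def is_effective(self) -> bool:
        """Treat the process as effective only if no element is missing."""
        return not self.missing_elements()


# Illustrative example: a complaint inbox with nothing behind it.
symbolic = ContestationProcess(forum="customer support inbox")
print(symbolic.missing_elements())
# → ['review_standard', 'admissible_evidence', 'remedy_if_upheld']
```

A process that names a forum but no standard, evidence rules, or remedy fails the check, which mirrors the point that contestation without those elements stays symbolic.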

What AI contestability by design looks like in practice

One reason AI contestability is such a useful topic is that it produces more concrete design requirements than abstract ethical slogans. The same contestability-by-design framework, developed by researchers at TU Delft, synthesizes the literature into five system features and six development practices. The five features are built-in safeguards against harmful behavior, interactive control over automated decisions, explanations of system behavior, human review and intervention requests, and tools for scrutiny by subjects or third parties. The six practices are ex-ante safeguards, agonistic approaches to ML development, quality assurance during development, quality assurance after deployment, risk mitigation strategies, and third-party oversight.
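One way a team might use this list is as a gap checklist. The sketch below is an illustration only: the feature and practice names are taken from the list above, while the function `contestability_gaps` and the idea of a set-based self-assessment are assumptions, not part of the framework itself.

```python
# The five features and six practices, as named in the list above.
FEATURES = (
    "built-in safeguards against harmful behavior",
    "interactive control over automated decisions",
    "explanations of system behavior",
    "human review and intervention requests",
    "tools for scrutiny by subjects or third parties",
)
PRACTICES = (
    "ex-ante safeguards",
    "agonistic approaches to ML development",
    "quality assurance during development",
    "quality assurance after deployment",
    "risk mitigation strategies",
    "third-party oversight",
)


def contestability_gaps(implemented: set[str]) -> dict[str, list[str]]:
    """Report which features and practices are not yet in place.

    `implemented` is a hypothetical self-assessment supplied by the team.
    """
    unknown = implemented - set(FEATURES) - set(PRACTICES)
    if unknown:
        # Reject typos so a misspelled item is not silently counted as missing.
        raise ValueError(f"Unrecognized items: {sorted(unknown)}")
    return {
        "missing_features": [f for f in FEATURES if f not in implemented],
        "missing_practices": [p for p in PRACTICES if p not in implemented],
    }


# Illustrative example: a system that only offers explanations and human review.
gaps = contestability_gaps({
    "explanations of system behavior",
    "human review and intervention requests",
})
print(len(gaps["missing_features"]), len(gaps["missing_practices"]))
# → 3 6
```

A checklist like this says nothing about the quality of each item. It only shows which parts of the framework a system has not addressed at all.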

This is useful because it turns a vague ethical idea into something operational. A contestable system is not just one with a help page or a confidence score. It is one that anticipates disagreement and designs for it. It expects that stakeholders may challenge data inputs, decision rules, outputs, or institutional application, and it builds channels for those challenges into the system and its surrounding process.

The healthcare example makes this concrete. Ploug and Holm argue that effective contestability in AI diagnostics requires access to information about data use, bias, system accuracy, and the role of the AI relative to clinicians. Those requirements suggest a broader principle: people cannot meaningfully contest what they are not allowed to see. But at the same time, simply releasing more technical detail does not automatically create effective contestability. The information has to be relevant to the specific challenge being raised.
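The relevance point can be made concrete with a small sketch that matches a challenge to the information domains it depends on. The four domains come from Ploug and Holm as summarized above; the `Domain` enum, the `match_disclosures` function, and the placeholder disclosure texts are hypothetical and only meant to illustrate the idea.

```python
from enum import Enum


class Domain(Enum):
    """The four information domains for contesting diagnostic AI."""

    DATA_USE = "how data is used"
    BIAS = "what biases may exist"
    PERFORMANCE = "how the system performs"
    DIVISION_OF_LABOR = "how labor is divided between the AI and clinicians"


def match_disclosures(
    challenge: list[Domain],
    disclosures: dict[Domain, str],
) -> tuple[dict[Domain, str], list[Domain]]:
    """Split the domains a challenge needs into available and missing.

    Only disclosures relevant to the challenge are returned, so the
    person contesting is not handed unrelated technical detail.
    """
    available = {d: disclosures[d] for d in challenge if disclosures.get(d)}
    missing = [d for d in challenge if d not in available]
    return available, missing


# Placeholder disclosures; a real system would hold actual documentation.
disclosures = {
    Domain.DATA_USE: "placeholder: description of data sources",
    Domain.PERFORMANCE: "placeholder: summary of validation results",
}

# A patient who suspects the system performs worse for their group
# needs both bias and performance information.
available, missing = match_disclosures(
    [Domain.BIAS, Domain.PERFORMANCE], disclosures
)
print([d.name for d in missing])
# → ['BIAS']
```

Here the challenge cannot be fully supported because nothing has been disclosed about bias, even though other information is available.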

There is also an empowerment dimension here. A paper in AI and Ethics on contesting and justifying algorithmic decisions argues that contestation is a key requirement in high-impact systems because it empowers human decision-makers rather than making them passive recipients of algorithmic output. That framing is especially valuable for Noetrion’s broader theme of systems and responsibility: good systems do not just automate decisions efficiently; they preserve room for judgment, critique, and revision.

Why AI contestability is likely to become more important, not less

As AI systems become more deeply integrated into hiring, education, finance, welfare administration, diagnostics, and security, the practical need for AI contestability will only grow. The research record already points in that direction. AI ethics guidelines have increasingly recognized the ability to contest as a safeguard, and scholars have argued that there is still too little design guidance for making contestation usable in practice. More recent work continues to push the field from static explanation toward dynamic challenge, revision, and procedural recourse.

That trajectory makes sense. A mature AI governance culture cannot stop at “the system produced an explanation.” It has to ask whether the explanation is linked to standards, review, power, and remedies. It has to ask whether the people affected can do anything with the information they receive. And it has to ask whether institutions are willing to design for disagreement rather than treating model outputs as final. That is the real promise of AI contestability: not merely more transparency, but a more accountable relationship between automated systems and the people who live with their consequences.

Sources:

- AI & Society – Beyond explainability: justifiability and contestability of algorithmic decision systems

- Minds and Machines – Contestable AI by Design: Towards a Framework

- AI & Society – Accountability in artificial intelligence: what it is and how it works

- Artificial Intelligence in Medicine – The four dimensions of contestable AI diagnostics

- AI and Ethics – Design for operator contestability: control over autonomous systems by introducing defeaters

- AI and Ethics – A framework to contest and justify algorithmic decisions
