Table of Contents

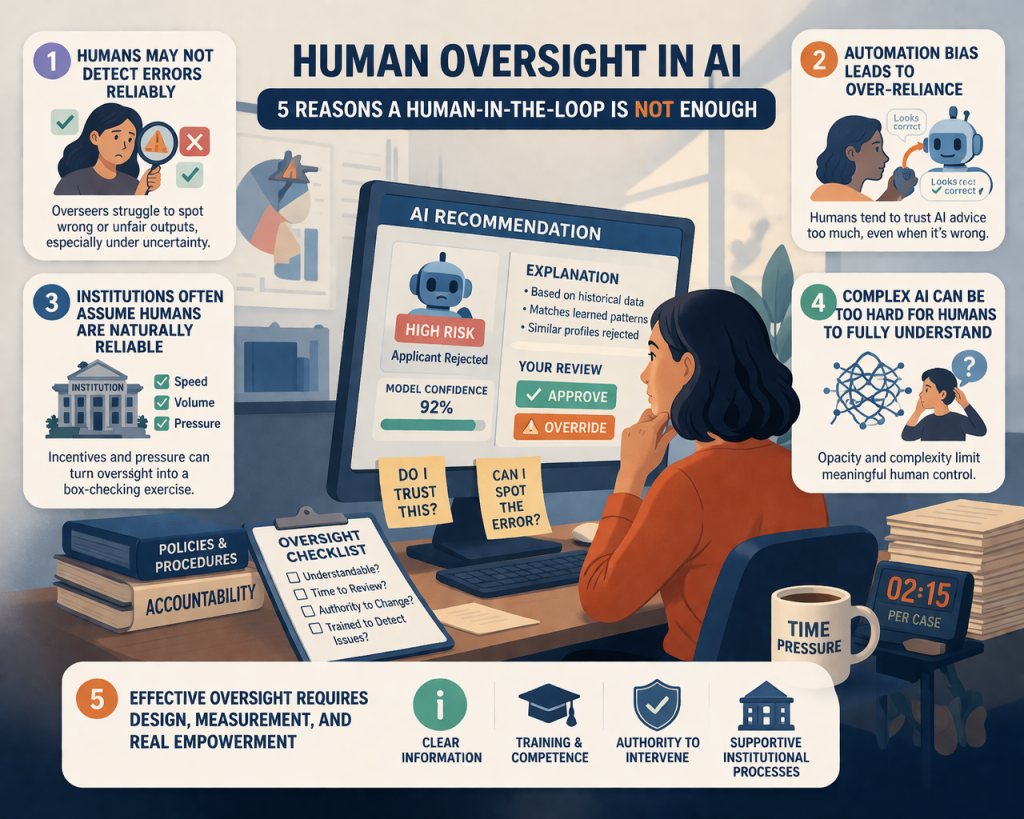

Human oversight in AI is often presented as the practical answer to the risks of automated decision-making. If an AI system is involved in hiring, healthcare, credit, or public administration, the common reassurance is simple: a human will still be there to supervise the outcome. That promise sounds reasonable, and it appears repeatedly in AI governance discussions because human oversight is supposed to improve safety, uphold values, and preserve accountability. But recent research makes an important point: the existence of human oversight does not by itself guarantee that oversight will be effective. In fact, human overseers may lack the competence, time, authority, or incentives needed to detect and correct harmful outputs.

That is why the real question is not whether a human appears somewhere in the loop. The real question is whether that person can meaningfully understand, evaluate, and challenge the system’s behavior. This distinction matters because many AI deployments still treat human oversight as a checkbox rather than a real design requirement. Research across law, ethics, and human-AI interaction increasingly argues that effective oversight depends on much more than nominal human presence. It depends on whether humans can detect errors, resist overreliance, intervene in time, and operate within institutions that actually support scrutiny rather than assume it.

1. Human oversight in AI fails when humans cannot reliably detect errors

The first problem with human oversight in AI is deceptively simple: oversight only works if humans can actually recognize when the system is wrong. A recent paper in Minds and Machines argues that reliable error detection is a crucial condition for effective oversight and proposes Signal Detection Theory as a framework for understanding when humans can, and cannot, distinguish correct from incorrect or unfair AI outputs. The paper emphasizes that oversight is meaningful only if overseers can detect errors and intervene, not merely observe system outputs passively.

This sounds obvious, but it is harder in practice than it appears. In many real settings, AI outputs do not arrive with an obvious label saying “wrong” or “unfair.” They arrive as plausibly formatted recommendations, scores, classifications, or alerts. A person asked to oversee them may need to evaluate them under uncertainty, time pressure, and incomplete context. The same Minds and Machines paper notes that task-related, system-related, and person-related factors all affect a human overseer’s sensitivity to errors and their tendency to flag problems. In other words, oversight is not just a matter of assigning responsibility. It is also a matter of human perceptual and judgment limits.

This immediately weakens the simplistic “human-in-the-loop” story. A human reviewer who cannot reliably identify a bad output is not providing a strong safeguard. They may create the appearance of accountability while failing to reduce the underlying risk. That is one reason recent oversight research focuses less on the mere existence of review and more on the conditions under which review actually works.

2. Human oversight in AI is vulnerable to automation bias

The second reason human oversight in AI is not enough is that humans do not respond neutrally to machine advice. A growing body of research examines automation bias —the tendency to over-rely on automated suggestions or to use them as shortcuts instead of engaging in careful independent judgment. A 2024 open-access article in Government Information Quarterly explicitly argues that the legal assumption that human decisions naturally safeguard fairness and quality does not hold up once automation bias is considered. Its abstract describes automation bias as excessive reliance on machine-generated proposals that can reduce meaningful engagement and deteriorate overall decision quality.

This problem is not purely theoretical. A 2023 paper in the journal Artificial Intelligence experimentally studied whether explanations reduce automation bias. The results were mixed in a revealing way: explanations often improved decision accuracy and reduced completion time, but they did not reduce automation bias and in some cases even increased it. The paper’s abstract and summary show that users were more likely to align with erroneous AI advice when advice was present, and that giving explanations did not reliably solve the overreliance problem.

That finding matters because it challenges one of the most common assumptions in responsible AI discourse. Many practitioners assume that if we combine human oversight with explainability, we have largely solved the accountability problem. But the evidence suggests otherwise. Human reviewers can still defer too quickly to AI outputs even when explanations are available. So if oversight is designed around the idea that explanations automatically make humans better reviewers, it may fail precisely when the stakes are highest.

3. Human oversight in AI breaks down when institutions assume humans are naturally reliable

A third problem is institutional. Human oversight in AI is often treated as if humans are inherently trustworthy reviewers. But research in AI & Society argues that this assumption is too optimistic. The article “Institutionalised distrust and human oversight of artificial intelligence” notes that human overseers are expected to improve accuracy and safety, uphold values, and build trust in AI, yet empirical research suggests humans are not always reliable in fulfilling oversight tasks. They may lack competence, or they may be affected by harmful incentives. The paper therefore proposes six normative principles aimed at designing institutions that anticipate the fallibility of overseers rather than simply trusting them by default.

This is a deeper point than it first appears. Oversight does not happen in a vacuum. It happens inside organizations with deadlines, hierarchies, incentives, and political pressures. A manager reviewing AI-assisted decisions may not have enough time to challenge them. A clinician may not want to contradict a system that is framed as highly accurate. A public servant may be pressured to process cases quickly rather than examine them critically. In those contexts, “human oversight” can become symbolic rather than substantive. The institution has a human reviewer on record, but the design of the surrounding environment does not support real scrutiny.

This is why the AI & Society paper’s idea of institutionalised distrust is so important. It suggests that responsible governance should not rely on the assumption that overseers will always act optimally. Instead, oversight mechanisms should be built to account for human fallibility the way democratic institutions account for the fallibility of officials: through checks, procedures, role clarity, and safeguards against blind trust.

4. Human oversight in AI is harder when systems become too complex to understand

A fourth reason human oversight in AI is not enough is that many AI systems are becoming more complex, more opaque, and more autonomous than the human review structures around them. A 2024 editorial in New Biotechnology directly raises the question of whether human oversight is still possible as AI systems become increasingly complex. Its abstract says that contemporary architectures, including large neural networks and generative AI applications, can undermine human understanding and decision-making capabilities, and it concludes that complete oversight may no longer be viable in some contexts, even if strategic interventions can still preserve accountability and safety.

This is not an argument against oversight. It is an argument against naive oversight. If a system is too opaque or too fast-moving for the assigned human reviewer to meaningfully understand, then human presence alone does not reintroduce control. At that point, the role of the human may drift toward rubber-stamping, exception handling, or after-the-fact responsibility without real comprehension. The oversight becomes nominal while the practical control remains with the system and the institutional process around it.

The same theme appears in work on Meaningful Human Control. A 2024 paper in AI and Ethics proposes three measurable components of meaningful human control — subjective, normative, and moral control — and shows experimentally that different designs of human-AI teaming produce different levels of human engagement and ethical control. In their pandemic triage experiment, participants reported more engagement and control when the AI advised or supported them than when the AI acted autonomously. This suggests that “a human somewhere nearby” is too weak a standard; the design of task allocation and decision authority materially changes whether control is meaningful at all.

5. Human oversight in AI works only when oversight is designed, measured, and empowered

If the previous sections sound pessimistic, the important corrective is this: the literature does not say that human oversight is useless. It says that badly designed oversight is weak. Effective oversight requires deliberate design. The Minds and Machines article argues that oversight effectiveness depends on humans being able to detect inaccuracies and unfairness under uncertainty, which means designers must think about factors that shape sensitivity and response bias. The AI and Ethics paper on meaningful human control shows that design choices about authority and autonomy influence whether humans feel engaged, exercise control, and remain ethically responsive. The AI & Society article on institutionalised distrust argues that institutions should be built around the expectation that overseers are fallible, not idealized.

Put differently, effective human oversight in AI depends on at least four conditions. First, the human must have enough information to evaluate outputs meaningfully. Second, they must have enough competence and time to do so. Third, they must have actual authority to intervene, not just ceremonial responsibility. Fourth, the surrounding organization must support oversight through process and incentives instead of undermining it through speed, opacity, or deference to automation. These conditions are consistent across the oversight literature even when the authors approach the issue from different disciplines.

This is also why the strongest future path for responsible AI is not “human-in-the-loop” as a slogan, but human oversight by design. That means measuring oversight quality, designing interfaces that support real error detection, anticipating automation bias, and creating institutional pathways for escalation and challenge. A system with genuine oversight should make it easier for humans to think critically, not easier for them to accept machine output without reflection.

What this means for responsible AI in practice

For practitioners, the lesson is straightforward but demanding. If an organization claims to use human oversight in AI, it should be able to answer practical questions: Who is responsible for reviewing outputs? What kinds of errors are they expected to catch? How are they trained? Under what conditions can they override the system? What incentives encourage scrutiny rather than speed? And how is oversight performance itself evaluated? The literature suggests that without answers to questions like these, “human oversight” risks becoming a governance label rather than a functioning safety mechanism.

That makes this topic especially relevant to the broader Noetrion arc. It connects directly to your earlier articles on AI ethics, AI transparency, AI bias, and AI contestability. Transparency matters because overseers need to understand enough to review. Contestability matters because people need meaningful ways to challenge outcomes. Bias matters because overseers must detect unfairness, not just technical errors. And ethics matters because the question is not merely whether a system performs, but whether responsibility remains legible when humans and machines share decision-making.

You might also like

AI Transparency Is Harder Than It Sounds: Beyond Explainability Alone

AI Ethics: Why It Is More Than Fairness, Bias, and Good Intentions

AI Contestability: Why Relying on Explanations Alone Is a Dangerous Risk for High‑Stakes Decisions

sources

Gunakan link biasa di WordPress pada frasa-frasa kontekstual di body, lalu boleh juga taruh daftar sumber di bawah:

- AI & SOCIETY – Institutionalised distrust and human oversight of artificial intelligence more

- Minds and Machines – Effective Human Oversight of AI-Based Systems: A Signal Detection Perspective more

- Government Information Quarterly – Automation bias in public administration more

- Artificial Intelligence – The effects of explanations on automation bias more

- AI and Ethics – Measuring meaningful human control in human–AI teaming more

- New Biotechnology – Is human oversight to AI systems still possible? more

Pingback: What Is Agentic AI? A Clear Beginner-to-Researcher Guide